Arm’s new mobile chips: Lumex, Mali G1-Ultra, and the push to put AI on your phone

Arm has just unveiled a major refresh of its mobile IP: a new Lumex family of mobile compute designs, a flagship Mali G1-Ultra GPU with ray-tracing and neural features, and a platform-first approach that stitches CPUs, GPUs and neural accelerators together for on-device AI. These changes mark a clear pivot: Arm wants smartphones, wearables and thin clients to run much bigger AI workloads locally, while also shortening time-to-market for silicon partners. (Reuters; Arm Newsroom)

Smartphones are about to look a lot more like tiny AI datacenters, but tuned for battery life, intermittent connectivity, and the constraints of a pocket. Arm’s latest announcements (branded as Lumex for mobile compute, plus a refreshed Mali GPU family that includes the Mali G1-Ultra) reveal a strategic shift: instead of selling isolated CPU cores and GPU blocks, Arm is delivering integrated, AI-first compute subsystems and richer GPU neural features that let device makers run larger, more capable models on device. That means better real-time language features, smarter camera enhancements, on-device assistants that don’t always phone home, and console-level graphics with lower power drain. (Reuters; Arm Newsroom)

In this article I’ll unpack what Arm announced, why it matters for handset makers and users, the tradeoffs involved, and how the ecosystem is likely to respond over the next 12–24 months.

What Arm actually announced (the headlines)

- Lumex compute designs: Arm introduced a new family of mobile compute designs positioned specifically for on-device AI across phones, wearables and thin clients. Lumex is framed as a platform: a collection of CPU, GPU, NPU and interconnect building blocks packaged so SoC designers can ship full systems more quickly. The pitch is time-to-market and AI readiness. (Reuters; Silicon Republic)

- Mali G1-Ultra GPU: a new flagship Mali GPU that Arm says brings desktop-quality ray tracing to mobile via a new Ray Tracing Unit v2 (RTUv2), with dedicated neural acceleration on GPU paths planned for future variants. Arm claims significant uplifts in ray-tracing throughput and raw graphics performance compared with prior generations. (Arm Newsroom)

- Platform centricity with Arm Compute Subsystems (CSS): Arm is leaning harder on preintegrated subsystems rather than just IP blocks: CPU clusters, memory controllers, GPU/NPU combos and software stacks packaged to simplify SoC development. The CSS approach reduces design complexity and gets partners to market faster with validated building blocks. (Arm Newsroom)

- AI everywhere, from wearables to flagship phones: Arm released variants covering everything from low-power wearables to high-performance mobile, signaling that the company wants its designs to scale across the device spectrum so AI features can be ubiquitous. Arm’s messaging stresses on-device inference and user privacy, along with improved battery/performance tradeoffs. (Reuters)

Those are the structural announcements. Now let’s dig into what this means technically and practically.

Why “Lumex” and integrated subsystems matter

Historically, Arm’s business model focused on licensing CPU and GPU core designs to silicon vendors (Qualcomm, MediaTek and Samsung, for example; Apple has long built its own custom cores on the Arm architecture). But the market has evolved: modern SoCs are dominated by custom integrations, NPUs, ISP pipelines, modem IP and software stacks. Device makers don’t want only a CPU core; they want a complete, validated foundation that reduces integration risk.

The Lumex and CSS messaging is Arm moving up the stack: instead of “here’s a core, go build,” Arm offers a pre-validated subsystem that bundles CPU clusters, GPU, memory interfaces and neural acceleration plumbing, plus software tools to support on-device ML workloads. That reduces engineering time and chip risk for silicon houses that can’t or don’t want to build every custom piece themselves. For markets like China, where many OEMs design in-house chips or work with foundry partners such as TSMC, these subsystems accelerate iteration, which is critical given the fast cadence of mobile. (Arm Newsroom; Silicon Republic)

For developers and users this should improve consistency: cameras that use the same neural pipelines across devices, AI assistants with comparable latency profiles, and gaming experiences that are more predictable across SoCs that adopt the Lumex approach.

Mali G1-Ultra: ray tracing + neural acceleration = console-level mobile graphics?

The Mali G1-Ultra announcement is notable for two technical pushes:

-

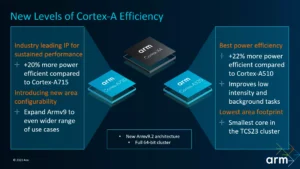

Ray tracing v2 (RTUv2): Arm claims a ~2x uplift in ray-tracing performance over the prior generation thanks to architectural changes. That opens the door for more realistic lighting, reflections and shadows in mobile titles — at a thermal/power envelope that Arm says is suitable for high-end phones. Early Arm material promotes double-digit percentage FPS gains in popular titles and a 20% improvement in raw graphics throughput in key benchmarks. Arm NewsroomNotebookcheck

-

Neural features on the GPU: Arm is embedding neural acceleration tightly with the GPU pipeline (and even planning dedicated neural accelerators on GPU die paths in coming products). The objective is unified, shader-friendly inference: computer vision tasks, dynamic upscaling, style transforms, and even parts of game AI could run on the GPU efficiently. This helps avoid splintering workloads between NPU and GPU and simplifies developer tooling. Arm Newsroom+1

Practically, that combination does two things. First, it raises the ceiling for mobile graphics fidelity — think higher quality lighting and reflections in graphically rich mobile titles, closer to a low-power console experience. Second, by enabling neural ops on the GPU, Arm aims to keep heavy vision and generative tasks local and performant (e.g., on-device photo enhancement, live video effects, or parts of local LLM inference pipelines).

On-device AI: latency, privacy and battery tradeoffs

Putting larger models on phones is a clear trend: lower latency, privacy (data stays local), and offline reliability are powerful selling points. But there are tradeoffs:

- Thermals & battery: Phone designs are thermally constrained. Running sustained inference on large models still consumes noticeable power and generates heat. Arm’s approach tries to mitigate this with specialized blocks, efficiency improvements, and workload partitioning, which means putting the right op on the right engine (CPU for control, GPU for parallel compute, NPU for matrix ops). But sustained, heavy workloads (e.g., long generative sessions) will still demand careful system-level thermal management. Arm’s performance gains are promising, but OEMs will still juggle clocks, power budgets and cooling. (Reuters; Arm Newsroom)

- Model size and quantization: Running large language models locally requires clever quantization and compiler toolchains. Arm’s subsystem story includes attention to ML compiler stacks and runtime support, a necessary step if Lumex devices are to support models that previously required cloud horsepower. Expect device makers to ship quantized, distilled models tuned for the hardware rather than full-size models. (Arm Newsroom)

- Software stack & developer tools: Hardware without software is half a solution. Arm is packaging software toolchains and runtimes so developers can map neural networks efficiently to the hardware. This is essential: model maintainers need easy pipelines to convert and optimize models (e.g., quantize BF16/FP16 weights to int8/int4), profile them, and deploy with confidence. CSS includes these higher-level capabilities as part of the pitch. (Arm Newsroom)
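To make the quantization step mentioned in the list above more concrete, here is a minimal sketch of symmetric per-tensor int8 quantization in plain Python. It is an illustration only, not Arm’s toolchain: the function names `quantize_int8` and `dequantize_int8` and the sample weights are my own assumptions, and production converters typically use per-channel scales, calibration data and packed storage.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor quantization of floats to integers in [-127, 127].

    Hypothetical helper for illustration; not part of any Arm SDK.
    """
    max_abs = max((abs(w) for w in weights), default=0.0)
    # An all-zero (or empty) tensor needs no scaling; use 1.0 to avoid dividing by zero.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    # Round to the nearest integer step and clamp to the int8 range.
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize_int8(quantized: List[int], scale: float) -> List[float]:
    """Map the stored integers back to approximate float values."""
    return [q * scale for q in quantized]


if __name__ == "__main__":
    weights = [0.02, -0.75, 0.31, 1.27, -1.0]  # made-up example values
    q, scale = quantize_int8(weights)
    restored = dequantize_int8(q, scale)
    worst_error = max(abs(a - b) for a, b in zip(weights, restored))
    print("int8 values:", q)
    print("scale:", scale)
    print("worst absolute error:", worst_error)
```

Each weight is stored as one byte plus a shared scale, so the rounding error per weight is bounded by half a quantization step. That is the basic trade a device maker accepts in exchange for a smaller, faster model.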

Who benefits, who competes, and who might worry

Winners:

- Mid-tier OEMs and regional SoC designers: companies that don’t have the R&D budget to design entire custom ecosystems benefit most from pre-integrated subsystems. It lowers the bar to ship AI-capable devices quickly. (Silicon Republic)

- App developers: a more consistent set of hardware building blocks and runtimes means less fragmentation. Developers can optimize once for a Lumex CSS profile and reach a broader set of devices with predictable performance.

- Users: potentially better offline AI capabilities (on-device assistants, privacy-focused features) and improved mobile gaming visuals.

Potentially worried parties:

- Traditional Arm licensees with deep silicon teams (e.g., Qualcomm, Apple): these players have been differentiating with custom CPU/GPU/NPU integrations and unique software stacks. Arm offering richer preintegrated subsystems could be seen as encroaching on the value some licensees capture via vertical integration. That said, major players often prefer full control, so Arm’s subsystems will likely appeal more to OEMs and regional chipmakers than to the established custom silicon houses. (The Guardian)

- Cloud providers: if more inference shifts to devices, certain cloud inference workloads could decline. But the reality is hybrid: devices will do latency-sensitive tasks locally while cloud remains essential for large-scale training and some large-context inference use cases.
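The hybrid split described in the last bullet can be sketched as a simple routing policy. This is a hedged illustration, not anything Arm or a cloud vendor has published: the `Request` fields, the `choose_backend` function and the 4096-token limit are all hypothetical placeholders, and a real system would also weigh battery state, thermals and model quality.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Request:
    prompt_tokens: int       # size of the input context
    needs_offline: bool      # True when no usable network connection exists
    privacy_sensitive: bool  # True when the data should not leave the device


# Hypothetical limit: the largest context the local model is assumed to handle well.
ON_DEVICE_MAX_TOKENS = 4096


def choose_backend(req: Request, max_local_tokens: int = ON_DEVICE_MAX_TOKENS) -> str:
    """Return "device" or "cloud" for a request under a simple illustrative policy."""
    if req.needs_offline or req.privacy_sensitive:
        # Assumed policy: these requests stay local even if the context
        # has to be truncated to fit the on-device model.
        return "device"
    # Otherwise prefer the device for short contexts and fall back to the cloud.
    return "device" if req.prompt_tokens <= max_local_tokens else "cloud"


if __name__ == "__main__":
    examples = [
        Request(prompt_tokens=512, needs_offline=False, privacy_sensitive=False),
        Request(prompt_tokens=20_000, needs_offline=False, privacy_sensitive=False),
        Request(prompt_tokens=20_000, needs_offline=True, privacy_sensitive=False),
    ]
    for req in examples:
        print(req, "->", choose_backend(req))
```

The point of the sketch is that the device is the default and the cloud is the fallback for contexts the local model cannot serve, which matches the cloud-augmented framing rather than a cloud-first one.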

Realistic rollout timeline and ecosystem signals

Arm’s announcements are blueprints; they don’t equal immediate device availability. Production timelines depend on TSMC and other foundry schedules, OEM adoption, and SoC vendors’ roadmaps. Historically, Arm core announcements translate into consumer devices 6–18 months later (depending on whether a partner is integrating quickly or doing deeper customization). For example, Arm’s messaging suggests TSMC 3nm process availability will be leveraged for high-end Lumex designs; supply and yields will influence final shipping dates. (Reuters; Notebookcheck)

Signals to watch in the coming months:

- Dimensity, Snapdragon or in-house SoC leaks showing Lumex cores or Mali G1-Ultra blocks in SoC listings (benchmark databases such as Geekbench and GFXBench).

- OEM announcements from Chinese brands that favor Arm’s subsystems for faster iteration.

- SDK and runtime releases from Arm enabling model conversion and profiling (a sign that software maturity is catching up to the hardware story). (Arm Newsroom)

Use cases that will change fastest

- Camera and imaging: local neural image processing pipelines for real-time HDR, denoising, and style transfers will be faster and more power efficient when mapped to GPU/NPU hybrids. Expect improved computational photography that runs quickly on device. (Arm Newsroom)

- On-device assistants & LLM features: short-context LLMs (for assistant replies, summarization, and query rewriting) can run locally with low latency. Offline transcription plus local models for privacy-sensitive tasks becomes more feasible. But for very large models or multi-user cloud services, cloud will remain necessary. (Reuters)

- Gaming: ray-traced lighting and neural upscaling will narrow the visual gap with consoles and PCs. Mobile game developers will experiment with higher fidelity assets and dynamic lighting schemes. (Arm Newsroom)

- AR/VR & wearables: efficiency gains at the low end of the Lumex family make more capable ML pipelines feasible in headsets and watches (e.g., better hand tracking and real-time scene understanding). (Reuters)

Open questions & risks

- How big will on-device models actually be? There is a spectrum: tiny prompt processors and task-specific models are easy to port, but the largest models will still need cloud backing. The industry’s work on model compression, distillation and sparse architectures will influence how much intelligence can be shifted to phones.

- Fragmentation vs. standardization: Even if Arm offers CSS, vendors may customize, adding fragmentation back in. The net benefit depends on how widely the Lumex profiles are adopted and whether the software stack becomes ubiquitous.

- Market dynamics with Arm making its own chips: Press reports (and earlier leaks) indicate Arm has contemplated building its own chips or partnering closely with hyperscalers. If Arm starts producing its own silicon (or tightly partnered reference designs) it could trigger strategic tension with existing licensees. That’s a business risk Arm will need to manage carefully. (The Guardian; SemiWiki)
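For the first question in the list above, a back-of-the-envelope calculation helps: the memory needed for a model’s weights is roughly the parameter count times the bits per weight, divided by eight. The sketch below is my own illustration, with the hypothetical function name `weight_footprint_gib` and example parameter counts that are not tied to any announced device. It ignores activations, the KV cache and the small overhead of quantization scales, so real footprints will be somewhat larger.

```python
def weight_footprint_gib(num_params: float, bits_per_weight: int) -> float:
    """Approximate memory for model weights alone, in GiB (1 GiB = 1024**3 bytes).

    Hypothetical helper for illustration; excludes activations and KV cache.
    """
    if num_params < 0 or bits_per_weight <= 0:
        raise ValueError("num_params must be >= 0 and bits_per_weight must be > 0")
    total_bytes = num_params * bits_per_weight / 8
    return total_bytes / (1024 ** 3)


if __name__ == "__main__":
    # Illustrative model sizes only (1B, 3B and 7B parameters).
    for params in (1e9, 3e9, 7e9):
        for bits in (16, 8, 4):
            gib = weight_footprint_gib(params, bits)
            print(f"{params / 1e9:.0f}B params @ {bits}-bit: {gib:.2f} GiB")
```

As a worked case, a 7-billion-parameter model needs about 13 GiB for weights at 16 bits but only around 3.3 GiB at 4 bits, which is why compression decides what fits within a phone’s memory budget.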

Bottom line: incremental revolution, not magic overnight

Arm’s new mobile chip strategy is a decisive, pragmatic move: push AI to the device with a platform mindset. The technical pieces — better GPUs with ray tracing, neural features on the GPU, integrated compute subsystems — are real improvements that will enable new user experiences. But the outcomes will be evolutionary rather than instant revolutions:

- Devices will get steadily smarter and more capable offline.

- Mobile gaming and computational photography will be immediate beneficiaries.

- Large generative workloads will remain a hybrid of device + cloud for the foreseeable future.

For consumers this promises faster, more private AI features and more realistic mobile graphics. For OEMs and SoC designers it shortens engineering cycles and lowers integration risk. For the broader industry, it’s another step toward shifting the compute continuum — from cloud-first to cloud-augmented, device-centric AI.

What to watch next (quick checklist)

- Official SoC announcements from Qualcomm, MediaTek, Xiaomi and other OEMs referencing Lumex, Mali G1-Ultra or CSS.

- Early performance and power-consumption benchmarks (real-world battery tests are the decisive metric).

- Arm’s software toolchain releases for model conversion, profiling and deployment.

- Evidence of wider adoption in wearables and AR headsets, an indicator that the low-power Lumex variants are viable.

- Any strategic moves from Arm toward making its own chips or deep partnerships that could reshape licensing dynamics. (Notebookcheck; The Guardian)

Sources & further reading

- Reuters: Arm launches next-generation mobile chip designs branded Lumex, aimed at on-device AI.

- Arm Newsroom / Blog: Mali G1-Ultra GPU announcement and details on RTUv2 and neural features.

- Android Authority: deep dive on Arm’s new C1 and G1 cores and the company’s AI focus.

- Notebookcheck: coverage of Mali G1-Ultra performance claims and early figures.

- Silicon Republic and other trade reporting on Lumex and Arm’s platform pivot.
